From Smarter Models to Self-Improving Agents

Anthropic’s biggest shift in 2026 isn’t a new model — it’s what happens between sessions. On May 6, the company shipped three new features for Claude Managed Agents: dreaming, outcomes, and multiagent orchestration. Combined with the April 16 release of Claude Opus 4.7, these updates sketch a clear picture of where Anthropic is steering its platform: away from one-shot prompts and toward agents that get better the longer you use them.

That’s a meaningful architectural bet, and it deserves scrutiny beyond the launch blog post.

Claude Opus 4.7: The Benchmark Gains Are Real

Opus 4.7 landed with measurable improvements on the metrics that matter most for coding agents. On SWE-bench Verified, the standard benchmark for autonomous issue resolution, it rose from 80.8% to 87.6%, a gain of 6.8 percentage points over Opus 4.6. On the harder SWE-bench Pro, it moved from 53.4% to 64.3% (a gain of 10.9 points), overtaking GPT-5.4 (57.7%) and Gemini’s best coding model (54.2%). For teams where code generation quality directly affects delivery throughput, those aren’t cosmetic improvements.

The vision upgrade is substantial. Opus 4.7 processes images up to 2,576 pixels on the long edge — roughly 3.75 megapixels — more than 3.3x the resolution supported by prior Claude models. This matters for agents that need to parse screenshots, architectural diagrams, or documentation rendered as images. The resolution improvement arrives at the same price point: $5 per million input tokens and $25 per million output tokens.

Two other changes are worth noting. A new reasoning depth option called xhigh sits between the existing high and max settings, giving developers finer control over latency versus thoroughness. And a new tokenizer generates up to 35% more tokens for the same input text. Because billing is per token, the same prompt can cost more even at unchanged per-token prices, which matters most in long, code-heavy conversations where prompt efficiency is already a concern.
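
To put the tokenizer change in dollar terms, here is a minimal back-of-the-envelope sketch using the per-token prices quoted above. The function name, the example token counts, and the choice to inflate only the input side are illustrative assumptions; whether output token counts grow by a similar amount is not stated, so it is left out.

```python
# Prices per million tokens, as quoted in this article for Opus 4.7.
INPUT_PRICE_PER_MTOK = 5.00
OUTPUT_PRICE_PER_MTOK = 25.00


def request_cost(input_tokens: int, output_tokens: int,
                 input_inflation: float = 0.0) -> float:
    """Estimate the dollar cost of one request.

    `input_inflation` is the fractional increase in input token count
    caused by the new tokenizer (0.35 means 35% more tokens for the same
    text). This is a hypothetical helper, not part of any SDK.
    """
    billed_input = input_tokens * (1.0 + input_inflation)
    input_cost = billed_input * INPUT_PRICE_PER_MTOK / 1_000_000
    output_cost = output_tokens * OUTPUT_PRICE_PER_MTOK / 1_000_000
    return input_cost + output_cost


# Example workload (made-up sizes): a 200k-token prompt, 20k tokens of output.
baseline = request_cost(200_000, 20_000)
worst_case = request_cost(200_000, 20_000, input_inflation=0.35)

print(f"baseline:   ${baseline:.2f}")
print(f"worst case: ${worst_case:.2f}")
```

With these example sizes, the estimate moves from $1.50 to $1.85 per request at the 35% upper bound. The more input-heavy the workload, the larger the share of the bill that the tokenizer change touches.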

What “Dreaming” Actually Means

Dreaming is the most conceptually interesting of the three May features, and also the one with the most caveats. Currently in research preview, it’s a scheduled process that reviews an agent’s past sessions and memory stores, extracts recurring patterns, and curates memories so the agent improves over time without manual retraining.

The analogy to human memory consolidation during sleep is intentional — Anthropic is leaning into it — but the mechanism is more mundane: a background job reviews session logs and writes updated entries to a persistent memory store. What makes it non-trivial is scale: dreaming surfaces patterns that span many sessions and users, including recurring mistakes, effective workarounds, and preferences shared across a team. A single agent session can’t observe these patterns. A dreaming run across hundreds of sessions can.
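
The cross-session idea can be illustrated with a toy consolidation job. This is a sketch under stated assumptions, not Anthropic’s implementation: `SessionLog`, `MemoryStore`, `consolidate`, and the `min_sessions` threshold are all hypothetical names, and real pattern extraction would be far richer than exact string matching.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class SessionLog:
    """Hypothetical record of one agent session."""
    session_id: str
    observations: list[str]  # normalized notes recorded during the session


@dataclass
class MemoryStore:
    """Hypothetical persistent memory: note -> number of supporting sessions."""
    entries: dict[str, int] = field(default_factory=dict)


def consolidate(logs: list[SessionLog], store: MemoryStore,
                min_sessions: int = 2) -> list[str]:
    """Promote observations that recur in at least `min_sessions` sessions."""
    support: Counter[str] = Counter()
    for log in logs:
        # Count each observation once per session so that a single noisy
        # session cannot push a note over the threshold on its own.
        support.update(set(log.observations))
    promoted = sorted(note for note, n in support.items() if n >= min_sessions)
    for note in promoted:
        store.entries[note] = support[note]
    return promoted


logs = [
    SessionLog("s1", ["convert .doc to .docx before editing",
                      "user prefers bullet summaries"]),
    SessionLog("s2", ["convert .doc to .docx before editing",
                      "retry upload on timeout"]),
    SessionLog("s3", ["retry upload on timeout",
                      "convert .doc to .docx before editing",
                      "retry upload on timeout"]),
]
store = MemoryStore()
promoted = consolidate(logs, store)
print(promoted)
```

The one-off preference from `s1` never reaches memory, while the two workarounds that recur across sessions do. That is the property a single session cannot provide: it has no way to know which of its observations will turn out to be recurring.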

Harvey, the legal AI company, offers the most concrete evidence. In internal tests, the completion rate of their Claude Managed Agent on complex legal drafting tasks rose roughly 6x after dreaming was enabled. The explanation is practical: knowledge about filetype workarounds and tool-specific behaviors previously had to be relearned in every session, and dreaming consolidated those learnings into durable memory.

The important caveat: dreaming is still a research preview. Anthropic hasn’t published a formal evaluation of how it performs across different domains, how memory quality degrades over time, or what happens when agents learn incorrect patterns. For now, it’s a compelling capability with limited external validation.

Outcomes: A Separate Grader in Its Own Context

Outcomes addresses a persistent weakness in agent pipelines: self-evaluation is unreliable. When an agent checks its own output, it’s influenced by the same reasoning chain that produced the output. Outcomes solves this by running a separate grader in an independent context window. You provide a rubric describing what success looks like; the grader evaluates the output against it without seeing the agent’s reasoning; and if the output doesn’t meet criteria, the grader provides specific feedback for another pass.
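
The control flow described above can be sketched as a generate, grade, revise loop. Everything here is a stand-in: `run_with_grader`, `Verdict`, and the toy `toy_generate` and `toy_grade` functions are hypothetical and do not reflect the actual Outcomes API. The point the sketch preserves is that the grader receives only the rubric and the output, never the generator’s reasoning.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Verdict:
    passed: bool
    feedback: str


def run_with_grader(
    task: str,
    rubric: str,
    generate: Callable[[str, Optional[str]], str],
    grade: Callable[[str, str], Verdict],
    max_attempts: int = 3,
) -> tuple[str, bool, int]:
    """Return (final output, whether it passed, attempts used)."""
    feedback: Optional[str] = None
    output = ""
    for attempt in range(1, max_attempts + 1):
        output = generate(task, feedback)
        # The grader is called with the rubric and the output only.
        verdict = grade(rubric, output)
        if verdict.passed:
            return output, True, attempt
        feedback = verdict.feedback  # fed into the next pass
    return output, False, max_attempts


def toy_generate(task: str, feedback: Optional[str]) -> str:
    """Stand-in for an agent: adds a summary only after being asked."""
    draft = f"Report on {task}."
    if feedback:
        draft += " Summary: key points listed."
    return draft


def toy_grade(rubric: str, output: str) -> Verdict:
    """Stand-in for a grader: the 'rubric' is a required substring."""
    if rubric in output:
        return Verdict(True, "")
    return Verdict(False, f"Output must contain '{rubric}'.")


result = run_with_grader("Q3 churn", "Summary:", toy_generate, toy_grade)
print(result)
```

In this toy run the first draft fails the rubric, the feedback triggers a revision, and the second attempt passes. Capping the loop with `max_attempts` is a design choice worth keeping in any real version, since a rubric the agent cannot satisfy would otherwise loop forever.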

Anthropic’s internal benchmarks report up to 10 percentage points of improvement in task success over standard prompting loops, with the largest gains on the hardest problems. On file generation specifically, .docx quality improved by 8.4% and .pptx by 10.1%. These numbers are self-reported, so treat them as directional rather than definitive — but the mechanism is sound, and the gains are consistent with what you’d expect from independent evaluation loops.

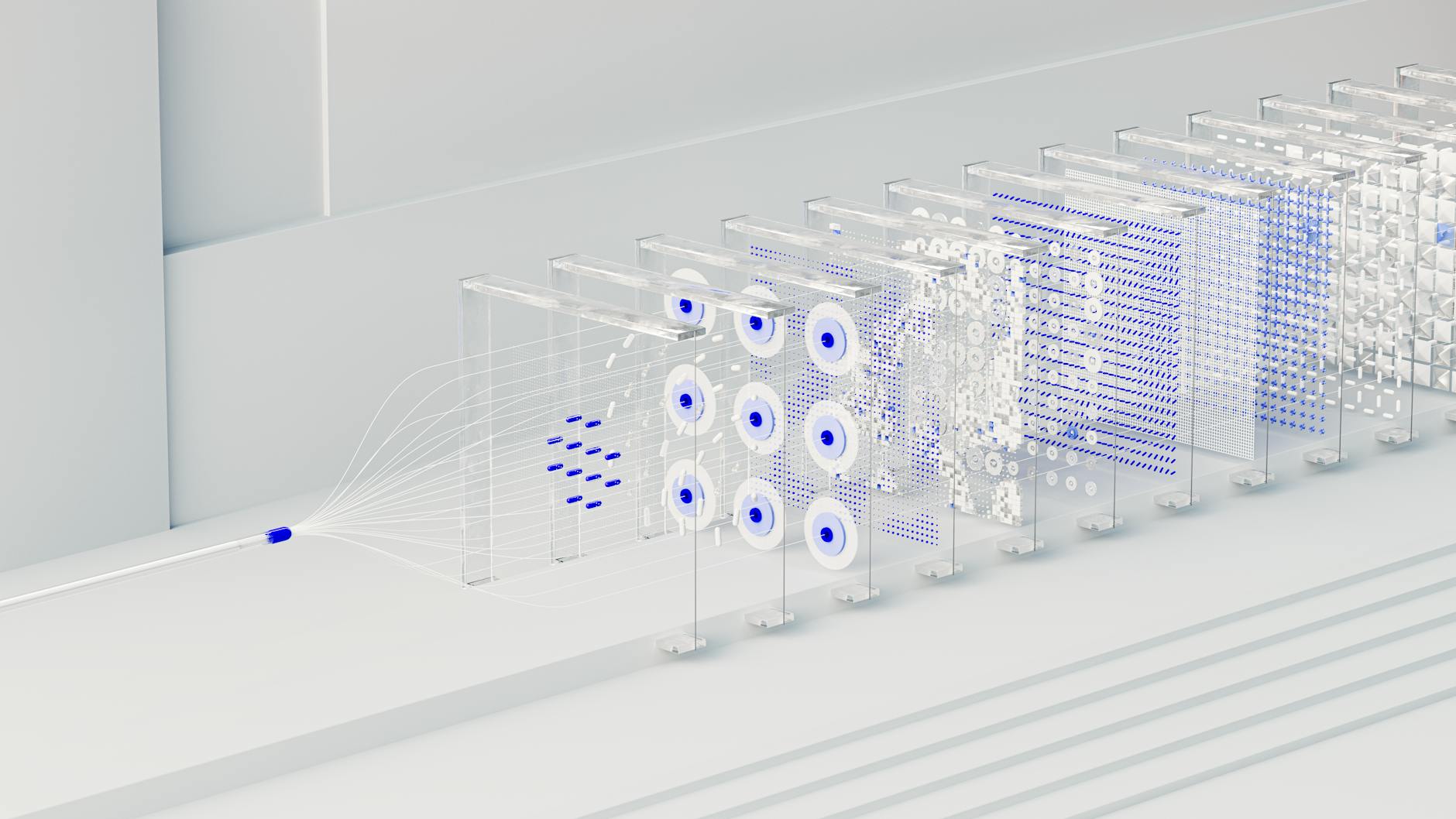

Multiagent Orchestration: Parallelism With a Chain of Command

When a task is too complex for a single agent — or when parallel execution would meaningfully reduce latency — multiagent orchestration lets a lead agent decompose the job and delegate subtasks to specialist agents, each with its own model, prompt, and tool access. These specialists write to a shared filesystem and contribute to the lead agent’s context.

This isn’t a new pattern in AI engineering — it mirrors designs teams have built manually with LangGraph, CrewAI, and similar frameworks. What Anthropic is offering is a managed version: the orchestration infrastructure is handled for you, the shared state is persisted automatically, and specialists can run in parallel without manual coordination code. For teams that don’t want to build and maintain their own multi-agent scaffolding, that’s a real reduction in operational overhead.
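
For a sense of the coordination code a managed service would take off your hands, here is a deliberately small hand-rolled version of the pattern using only the Python standard library. The `orchestrate` function, the role names, and the lambda "specialists" are hypothetical placeholders for real model calls; nothing here is Anthropic’s API.

```python
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path
from typing import Callable

# A specialist takes a subtask description and returns its result as text.
Specialist = Callable[[str], str]


def orchestrate(job: dict[str, str],
                specialists: dict[str, Specialist],
                workspace: Path) -> str:
    """Run one specialist per role in parallel and merge their results.

    `job` maps a role name to the subtask delegated to that role.
    Each specialist writes its result to a file in the shared workspace;
    the lead then reads those files back into a single context string.
    """
    def run(role: str) -> Path:
        result = specialists[role](job[role])
        out = workspace / f"{role}.txt"
        out.write_text(result, encoding="utf-8")
        return out

    roles = sorted(job)  # fixed order keeps the merged output deterministic
    with ThreadPoolExecutor(max_workers=max(1, len(roles))) as pool:
        paths = list(pool.map(run, roles))

    return "\n".join(
        f"[{p.stem}] {p.read_text(encoding='utf-8')}" for p in paths
    )


specialists = {
    "research": lambda subtask: f"notes on {subtask}",
    "writer": lambda subtask: f"draft of {subtask}",
}
job = {"research": "pricing", "writer": "summary"}

with tempfile.TemporaryDirectory() as tmp:
    report = orchestrate(job, specialists, Path(tmp))

print(report)
```

Even this toy omits retries, timeouts, per-specialist tool permissions, and state that survives a crash. Those omissions are most of the operational overhead the paragraph above refers to.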

The Bigger Picture: Agents That Compound

Taken together, these features represent Anthropic’s answer to a genuine limitation of current AI tooling: agents don’t accumulate value over time. Each session starts fresh. Dreaming is an attempt to change that — to make agents that genuinely learn from deployment rather than just from pretraining. Outcomes and orchestration address the reliability and parallelism problems that prevent agents from handling long-horizon, multi-step work in production.

The unresolved question is how this interacts with the absorption capacity problem documented across enterprise AI deployments in 2026. Most teams can’t yet change their workflows fast enough to absorb what agents produce today, let alone what self-improving agents would produce. Better agents don’t automatically translate to better delivery: as the DORA data from earlier this year showed, the bottleneck is rarely the model.

Still, the dreaming architecture is a meaningful step. If Anthropic can demonstrate that dreaming generalizes beyond Harvey’s legal use case — and publishes the evaluation methodology — it would be one of the more durable improvements to agentic AI this year.

Further Reading

- New in Claude Managed Agents: dreaming, outcomes, and multiagent orchestration — Anthropic’s official announcement with technical detail on all three features

- VentureBeat: Anthropic introduces “dreaming” — a solid third-party breakdown of the mechanism and its limitations

- Claude Opus 4.7 Benchmarks Explained — detailed breakdown of the SWE-bench numbers and what they mean for real coding workflows